I began my internship at DataWorks NC with a vision of somehow bringing the strengths of data science to the world of community organizing and non-profit work. I wanted to use the rigorous data analysis techniques I am learning at Duke to add to the local conversation around chronic stress and health.

I planned to do this by using machine learning techniques to cluster neighborhoods into similar groups and create a statistical model to look at the impact evictions have on neighborhood diabetes rates for different types of neighborhoods.

It didn’t work.

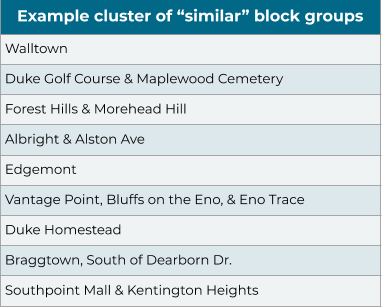

The clusters that resulted from the machine learning algorithms do not make much logical sense in the real world context. Anyone who lives in Durham and looks at the clustered groups, knows that the groupings are not very similar in terms of the people who live there and the lives they live. Therefore, it is unclear how these groups, even if they are found to be statistically significant, could be interpreted as similar or meaningful for community work.

The list below represents block groups in one of the clusters found.

Why Data Science Projects Fail in the Community Organizing Space

This summer, I saw my approach mirror the many ways in which well-intentioned, bright, and creative minds continually get it wrong. Not because they don’t understand the math. Not because they don’t know how to clean or visualize the data. They fail, as I initially failed, because they do not understand the data the organization needs to operate in the context of their work.

DataWorks NC’s mission is to democratize data and facilitate community dialogue. They bring data and information to the community, so that individuals and neighborhoods can interpret and use the data in the ways they see best fit. As a local public health practitioner who has primarily used data to provide recommendations to executive leadership, this was a very difficult part of my work to practice. I did not understand how to use my tools in this context.

Recognizing and Connecting with the Complexity of Community Work

Data science has a way of giving an illusion of certainty. In school, we as data scientists are taught all the ways we can mathematically show as much of it as possible. We pare down and consolidate huge amounts of data, wrangling them like a wild animal until they fall into line and into a form that is neat and orderly. But in community organizing work, the data are all about the people. They describe the lives they live and the factors that impact their ability to thrive. But much of their life stories will not be found in the data we currently have.

The stories of people are messy; their lives are more complex than the few data points that record a version of their lives in data. But much of the knowledge in this work lives outside of a cleaned dataset. In the search for certainty, we as data scientists often clean out much of this complexity, and in the process lose a lot of context, missing the true narrative.

The Reality is That Algorithms are Only as Good as the Purpose They Serve

In my summer work, I did all kinds of math in an attempt to delete bias or assumptions that could impact the certainty and purity of my results. However, when that failed, I ended up using a combination of google maps and community-created neighborhood boundaries to inform my work. In this case, understanding the data as places, with people and different stories, was more important than knowing the exact numbers and census-generated identifying features.

Local history and policies shape the neighborhoods of Durham in ways not obvious from my data; for instance, Braggtown, was a separate, unincorporated community until 1957, when it became a part of Durham. While this neighborhood has a strong identity, it is divided across several block groups in my data. By looking more closely at the dataset as places rather than identifiers, I was able to locate the outliers and areas where small or divided numbers were causing exaggerated rates.

With revisions based on community knowledge from websites such as Durham Hoods and Open Durham, I ran the analysis a second time. These revisions provided significant results. Once I eliminated the perspective of mathematically-generated clusters, the relationships between chronic disease outcomes and evictions became clear. Specifically, the revised models demonstrated that neighborhoods with higher eviction rates and higher uninsured rates also had higher rates of poorly managed diabetes.

Rethinking Certainty by Using Data to Start the Conversation, Not to End It

It is scary to me as a future data scientist to know how easy it is to let models stand as truths, without being checked by cultural reality. Rather than bringing the tools and a solution to DataWorks NC, I find myself leaving my internship with the seed of an idea: the idea that the data science community needs to think outside of certainty. As ethical data scientists, it is important that we understand that the way we communicate findings has real consequences.

Data science as a field would benefit from finding ways to appreciate the complexity of the lives data describe, and connect with them in a meaningful way. If nothing else, we must acknowledge the limitations of the data we have and recognize the community as the experts in these stories. Community members, organizers, and non-profits fight for a place to be heard, so their knowledge can be shared and understood.

I ask my fellow data scientists, do our data processes have the capacity to pick up on this community intelligence? Do the data that we work so hard to wrangle illuminate these voices?

Messy data can be scrubbed into tidy models. But a strong model is not the same thing as a true model; this comes with an in-depth understanding of the data. The wisdom of these truths lies with the people; and the knowledge of people is an asset we are still learning to harness.